By now, you’ve probably heard about the SaaSpocalypse. You used to have to weigh the cost of buying or adopting something that wasn’t exactly to spec against the cost of building it yourself. While I think the threat to the software industry has been exaggerated, generative AI has dramatically shifted the balance between these options in favor of building it yourself.

One thing that made me uncomfortable when I started seriously using agentic AI was how arbitrary it felt. The state of my project was described by a random set of Markdown files — not a good fit for my programmer OCD. I worried about making the wrong choices, how sustainable they would be in the long term as my project grew, and how wasteful it was for the agent to constantly parse them for meaning.

Luckily, I had recently read Steve Yegge’s article about the development of Beads. His core insight was to keep track of everything you do and every decision you make in a database. When it’s time to work on a task, you can use the database to immediately construct the context the agent needs, instead of having it sift through a global set of Markdown files.

I was now in a situation where I had to weigh the option of using Beads versus just building it “myself.” The prospect of installing a vibe-coded application consisting of tens of thousands of lines of code didn’t feel particularly attractive compared to creating an application mapped exactly to my thought process. Enter Shelby, my own minimal take on Beads.

- The project is a single, self-contained executable that I can drop into any project where I want better agent memory.

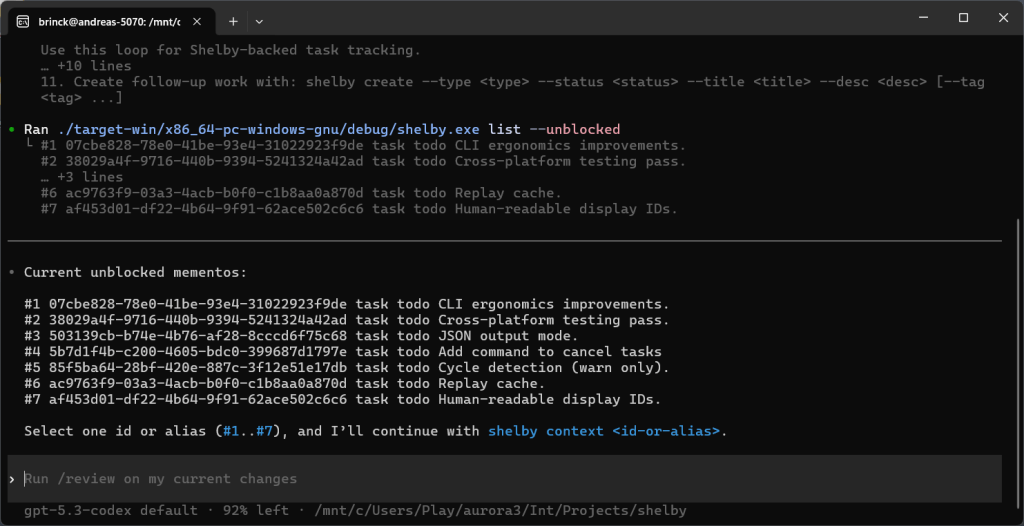

- On first use, the agent is asked to run

shelby help agent, which presents information on how to set up the development loop inAGENTS.mdgoing forward. - Fundamentally, the structure is the same as Beads. Data is recorded in an append-only JSONL event log stored directly in the repository. I call these records mementos. All Shelby commands start by replaying the log to reconstruct the current state.

- Once a task has been selected,

shelby context <id-or-alias>is used to provide the agent with the necessary context. - I’m not using this yet, but Shelby is designed to work with multiple parallel agents.

- I’m now using Shelby to develop Shelby

If we ignore the fact that Shelby is only necessary because agentic AI coding became available, I could have built a tool like this manually. I’ve avoided that kind of thing in the past, though, since when I’m working on a home project I try to keep my eyes on the ball and focus on the task I’m interested in, rather than getting pulled into low-value rabbit holes. In numbers, this is what it took to put Shelby together:

- 7 evenings

- 5168 lines of Rust code

- 120 unit tests

- 60 integration tests

As I’ve worked more with the agents, I’ve started to embrace the arbitrariness of the work and recognize it as the beauty of the model. These agents are general reasoning machines and can work with whatever tools you provide them. I believe that eventually a few somewhat standardized schools of thought will emerge around how to best work with these agents, but I don’t see why Anthropic, OpenAI, or any of the other model makers would want to put their weight behind any particular workflow. Investing in companies that try to sell workflows like these also seems like a good way to quickly lose a lot of money. These companies are rent seekers, and I think they’ll eventually be squeezed out of the market.

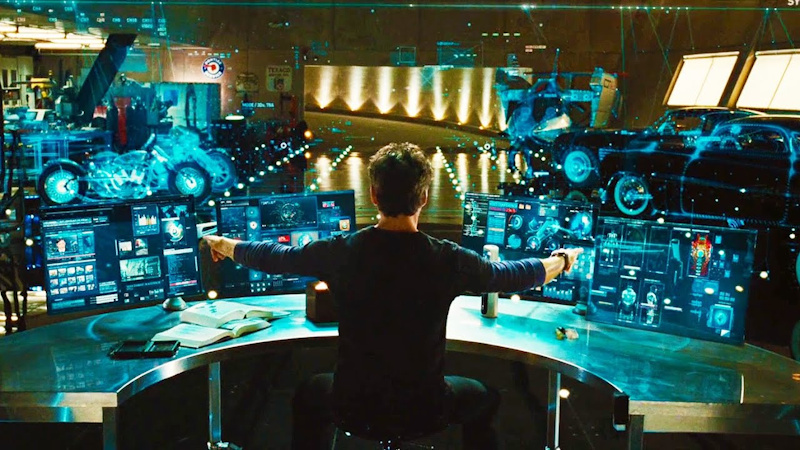

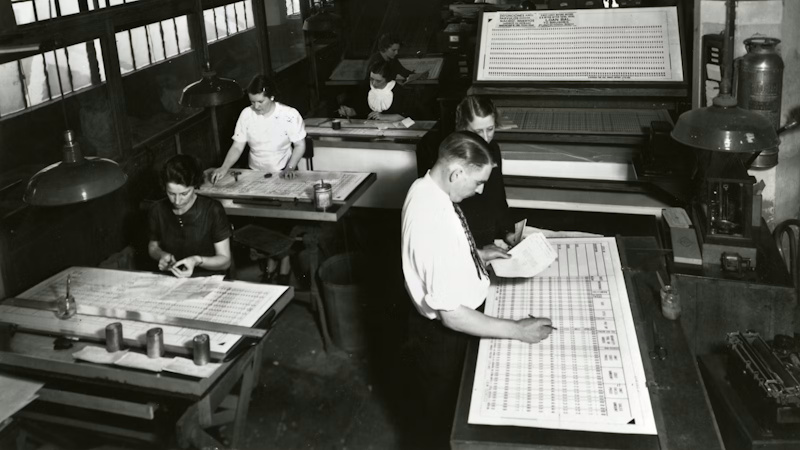

One final reflection on building Shelby. A lot of people have commented on how addictive this kind of vibe coding is. I personally don’t find it particularly enjoyable compared to regular programming. I miss the immediacy of writing the code myself and being in the zone. Most of my time last week was spent sitting around waiting for the machine to complete its tasks. It feels less like Tony Stark working with JARVIS in Iron Man and more like being in the forties, waiting for a batch of punch cards to finish.

Image Credit: Iron Man (2008), © Marvel Studios

Image Credit: IBM History (ibm.com/history/punched-card) © International Business Machines Corporation.

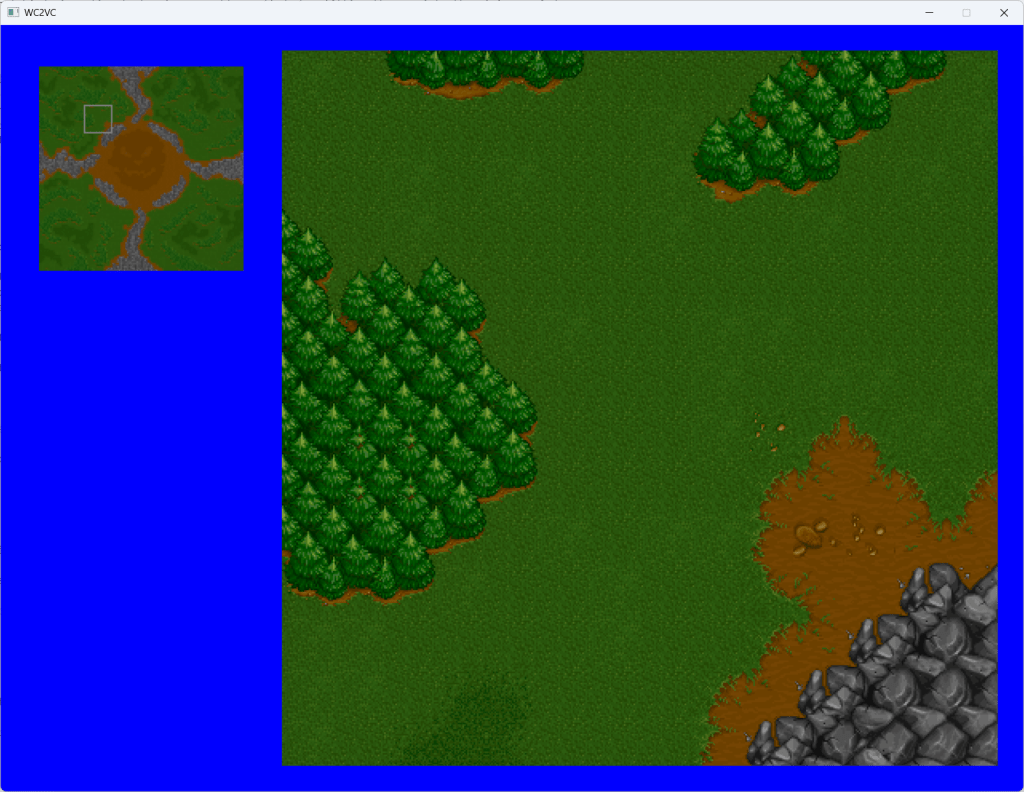

At this point the models are good enough that the most meaningful improvement would be speed. While we’re waiting for this “single-threaded” performance to improve, many developers are working around it by setting up their own “multi-threading” in the form of agent-orchestrator tooling. To continue my learning journey, this is the next step for me as well. I’m going to continue using my vibe-coded Warcraft II project as the vehicle to try out these ideas. Oh, and about that — did I mention that I fixed the borked map loading?

Leave a comment